Receiving artifacts from GCS

This functionality relies on Google’s Pub/Sub system to deliver messages to Spinnaker. Pub/Sub must be configured to send messages to Spinnaker’s event bus as shown below.

Prerequisites

If you or your Spinnaker admin have already configured Spinnaker to listen to Pub/Sub messages from the GCS bucket you plan to publish objects to, you can skip this section. One Pub/Sub subscription can be used to trigger as many independent Spinnaker pipelines as needed.

You need the following:

- A billing-enabled Google Cloud Platform (GCP) project. This will be referred to as $PROJECT from now on.
- gcloud. Make sure to run gcloud auth login if you have installed gcloud for the first time.
- A running Spinnaker instance. This guide shows you how to configure an existing one to accept GCS messages and download the files referenced by the messages in your pipelines.
- Artifact support enabled.

At this point, we will configure Pub/Sub and a GCS artifact account. The Pub/Sub messages will be received by Spinnaker whenever a file is uploaded or changed, and the artifact account will allow you to download these where necessary.

1. Configure Google Pub/Sub for GCS

Follow the Pub/Sub configuration. In particular, pay attention to the GCS section, since this is where we’ll be publishing our files.

2. Configure a GCS artifact account

Follow the GCS artifact configuration.

3. Apply your configuration changes

Once the Pub/Sub and artifact changes have been made using Halyard, run

hal deploy apply

to apply them in Spinnaker.

Using GCS artifacts in pipelines

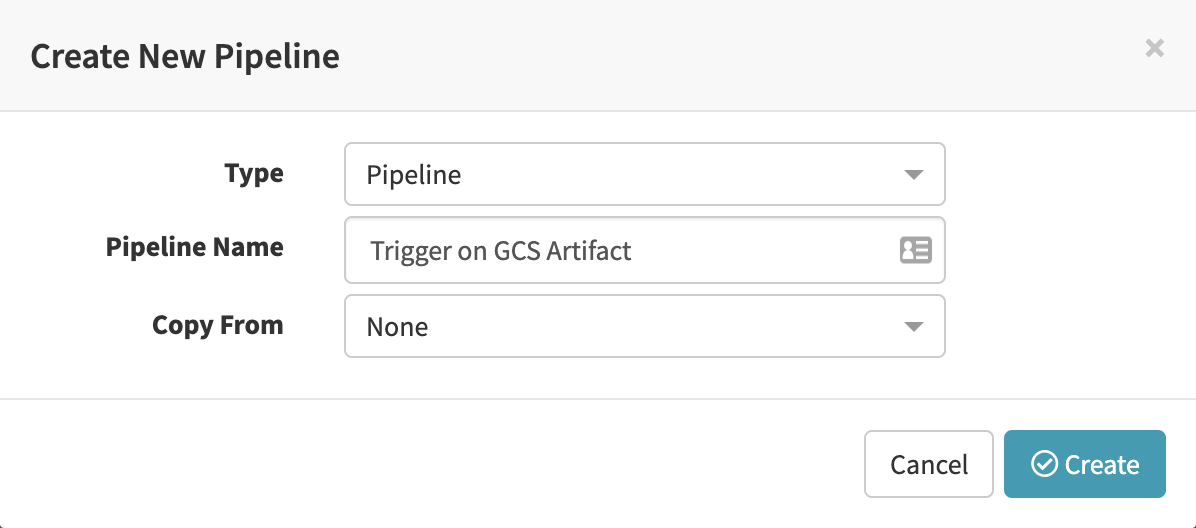

You need a pipeline, either existing or new, that should be triggered by changes to GCS artifacts. If you do not have a pipeline, create one as shown below.

You can create and edit pipelines in the Pipelines tab of Spinnaker.

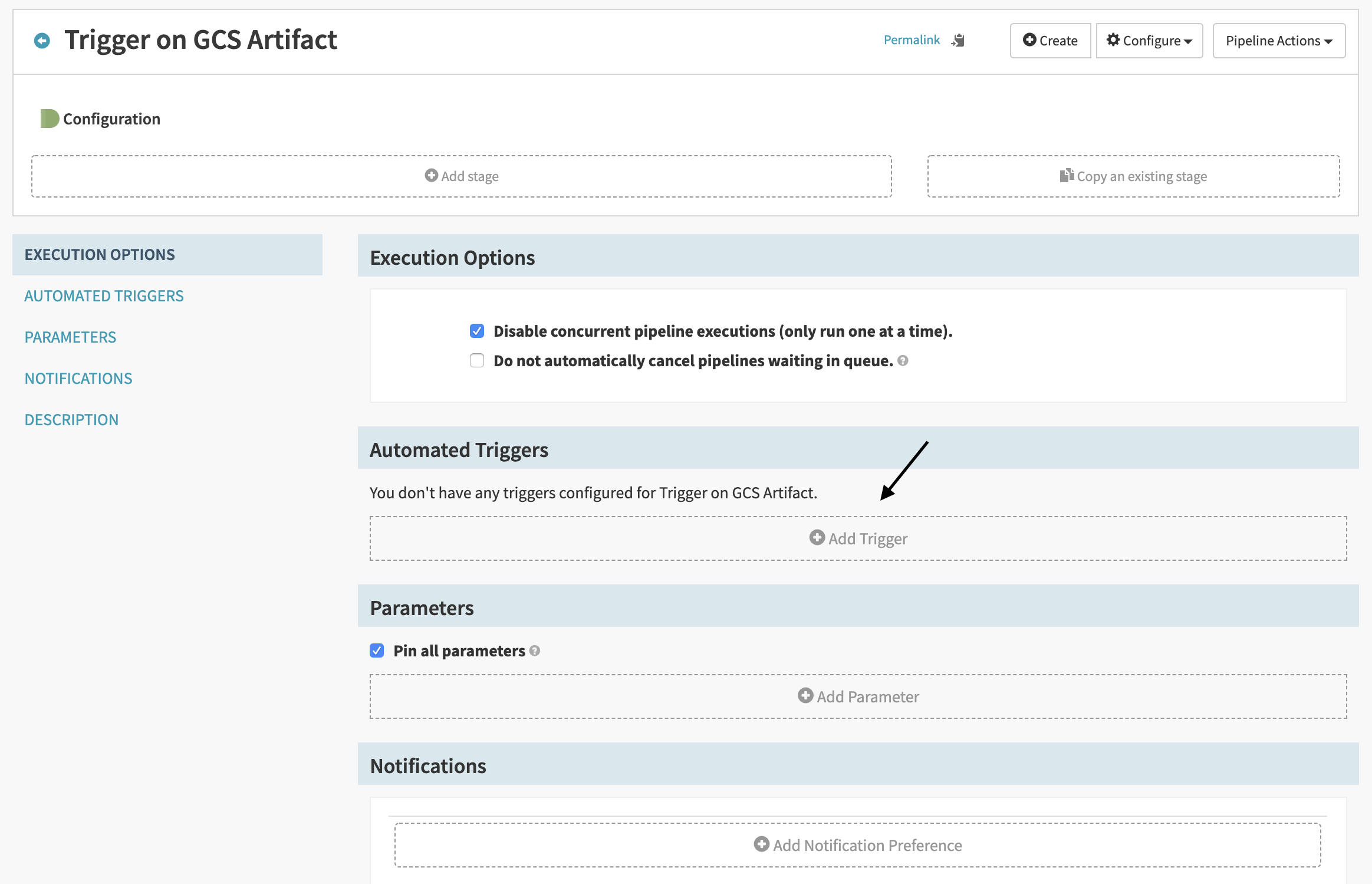

Configure the GCS trigger

Let’s add a Pub/Sub trigger to run our pipeline.

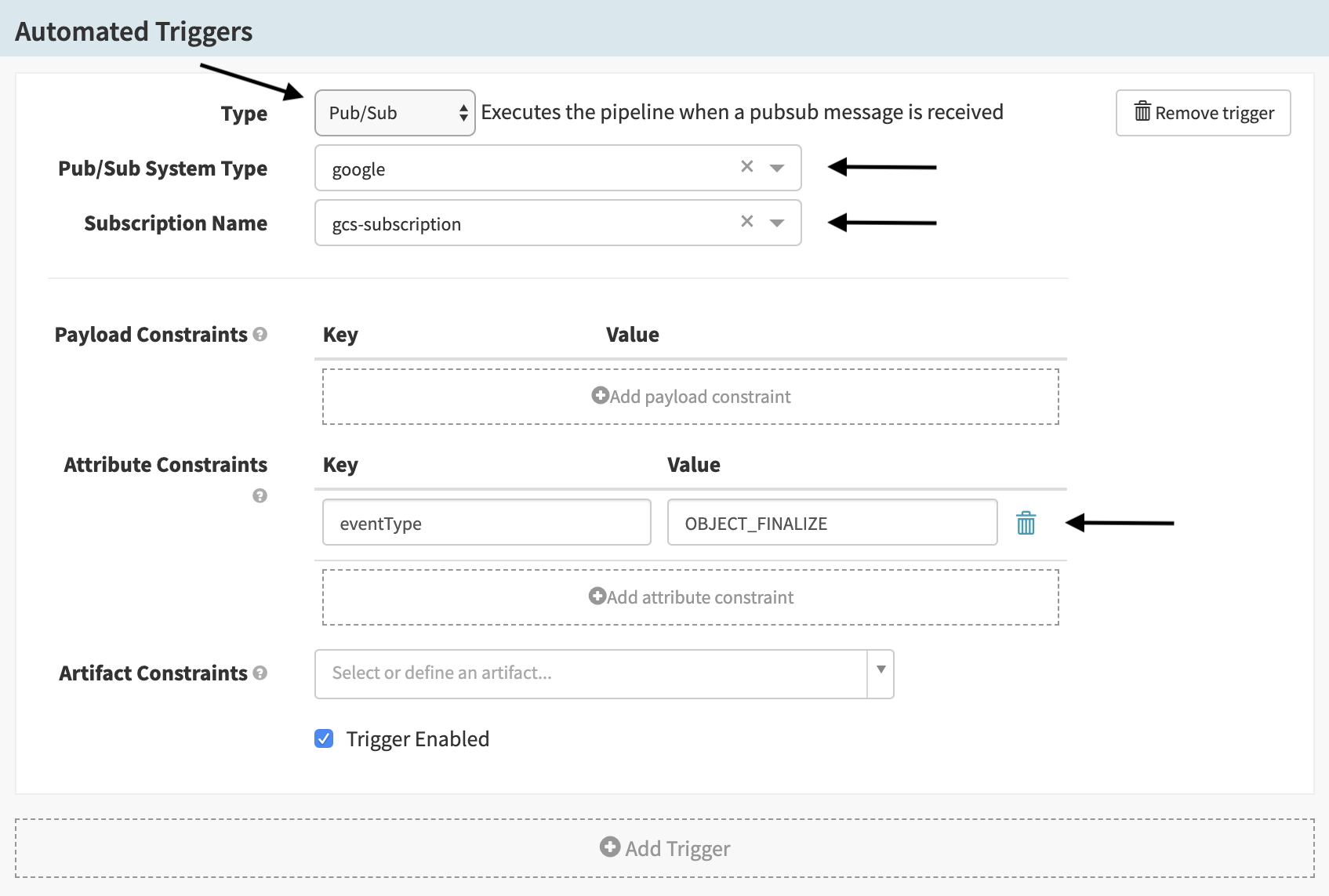

Next, we must configure the trigger:

| Field | Value |
|---|---|
| Type | “Pub/Sub” |
| Pub/Sub System Type | “Google” |
| Subscription Name | Depends on your Pub/Sub configuration (from Halyard) |
| Attribute Constraints | Must be configured to include the pair eventType:OBJECT_FINALIZE (see the docs) |
| Expected Artifacts | Must reference the GCS artifact for this trigger (see “Configure the GCS artifact”) |

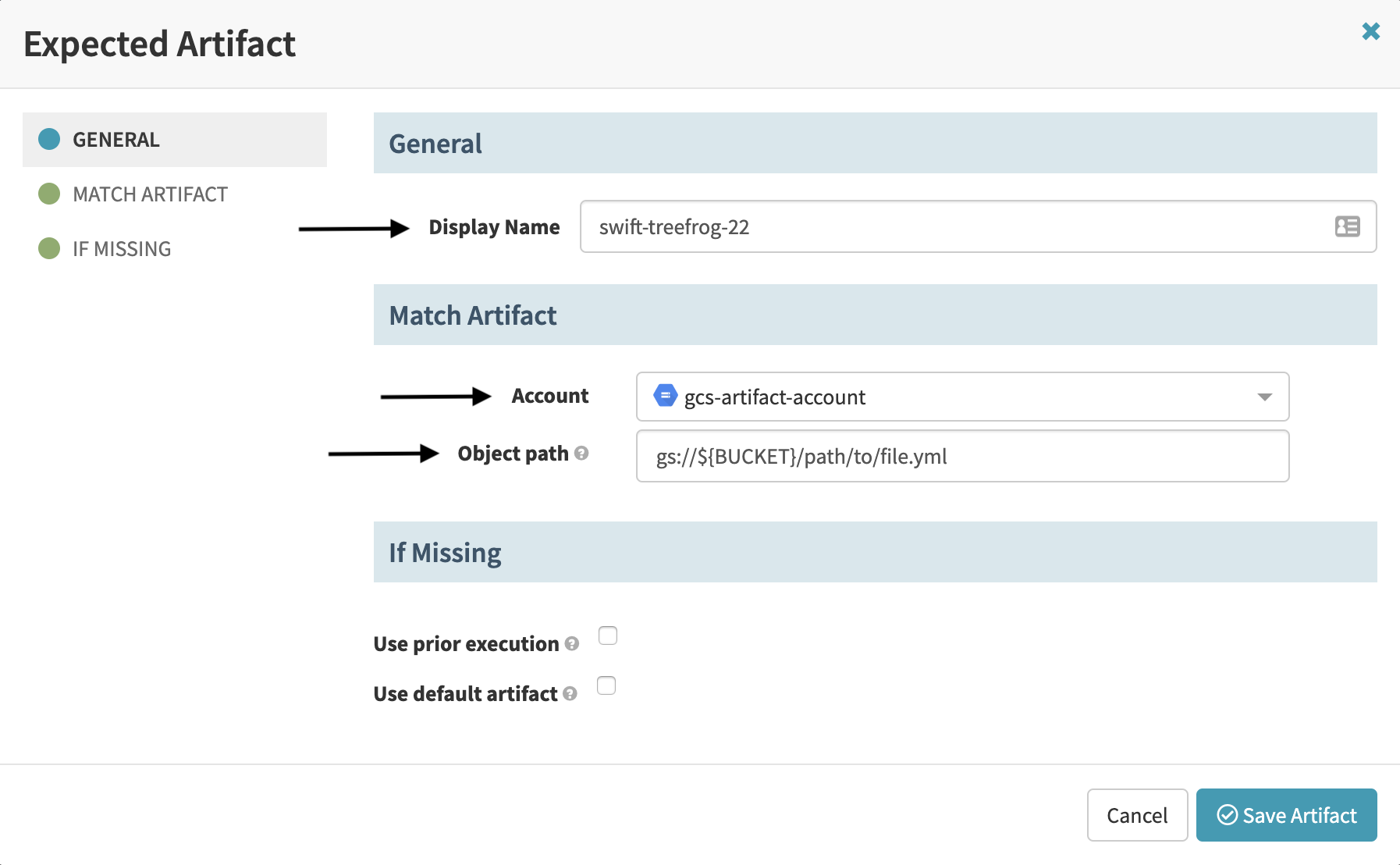
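To illustrate why the eventType:OBJECT_FINALIZE constraint matters, the sketch below mimics the filtering in Bash. GCS notifications carry an eventType attribute, and only OBJECT_FINALIZE indicates that an object was created or overwritten. This is a simplified illustration that assumes a plain equality check, not Spinnaker's actual matching code; the helper name `check_event_type` is hypothetical.

```shell
#!/usr/bin/env bash
# Attribute constraint from the trigger configuration.
REQUIRED_EVENT_TYPE="OBJECT_FINALIZE"

# Prints "trigger" if the message's eventType satisfies the constraint,
# "ignore" otherwise. Plain equality is an assumption made for this sketch.
check_event_type() {
  local event_type="$1"
  if [[ "$event_type" == "$REQUIRED_EVENT_TYPE" ]]; then
    echo "trigger"
  else
    echo "ignore"
  fi
}

# Event types that GCS notifications can carry.
for event_type in OBJECT_FINALIZE OBJECT_METADATA_UPDATE OBJECT_DELETE OBJECT_ARCHIVE; do
  echo "${event_type}: $(check_event_type "$event_type")"
done
```

Without the constraint, deletions and metadata updates on the bucket would also reach the trigger.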

Configure the GCS artifact

Now we need to declare that the Pub/Sub trigger expects that a specific artifact matching some criteria is available before the pipeline starts executing. In doing so, you guarantee that an artifact matching your description is present in the pipeline’s execution context. If no artifact for this description is present, the pipeline won’t start.

To configure the artifact, go to the Artifact Constraints dropdown for the Pub/Sub trigger configuration, and select “Define a new artifact…” to bring up the Expected Artifact form.

Enter a Display Name, or leave the autogenerated default. In the Account dropdown, select your GCS account. Enter the fully qualified GCS path in the Object path field. Note: this path can be a regular expression. You can, for example, set the object path to be gs://${BUCKET}/folder/.* to trigger on any change to an object inside folder in your ${BUCKET}.

${BUCKET} is a placeholder for the name of the GCS bucket that you configured above to send Pub/Sub messages.
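To get a feel for which object paths a pattern such as gs://${BUCKET}/folder/.* would cover, the sketch below checks a few candidate paths in Bash. It is only an approximation: it assumes the pattern must match the entire path, and it uses Bash's POSIX extended regular expressions rather than Spinnaker's own matcher. The bucket name `my-bucket` and the helper `matches_object_path` are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical bucket name, used only for illustration.
BUCKET="my-bucket"
# The Object path pattern as entered in the Expected Artifact form.
PATTERN="gs://${BUCKET}/folder/.*"

# Prints "match" if the whole path matches the pattern, "no match" otherwise.
matches_object_path() {
  local path="$1"
  # Anchor the pattern so it has to cover the entire path (an assumption).
  local regex="^${PATTERN}\$"
  if [[ "$path" =~ $regex ]]; then
    echo "match"
  else
    echo "no match"
  fi
}

matches_object_path "gs://${BUCKET}/folder/manifest.yaml"   # prints "match"
matches_object_path "gs://${BUCKET}/other/manifest.yaml"    # prints "no match"
```

Trying a pattern this way before saving it can catch an overly broad or overly narrow Object path early.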

Test the pipeline

If you upload a file to a path matching your configured Object path, the pipeline should execute. If it doesn’t, you can start by checking the logs in the Echo service.
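A test run could look like the following sketch. It makes several assumptions that may not hold for your setup: `gsutil` and `kubectl` are installed, the bucket is called `my-bucket`, the Object path covers `folder/`, and Spinnaker runs on Kubernetes with Echo in a deployment named `spin-echo` in the `spinnaker` namespace. The function names and the object name `trigger-test.txt` are hypothetical; adjust all of these to your installation.

```shell
#!/usr/bin/env bash
# Hypothetical bucket name; replace with the bucket configured for Pub/Sub.
BUCKET="my-bucket"

# Upload a small timestamped file to a path that should match the
# configured Object path (assumed here to cover folder/).
upload_test_object() {
  local tmp
  tmp="$(mktemp)"
  date > "$tmp"
  gsutil cp "$tmp" "gs://${BUCKET}/folder/trigger-test.txt"
  rm -f "$tmp"
}

# Show recent Echo log lines that mention Pub/Sub.
# Assumes a Kubernetes install with Echo in deployment/spin-echo,
# namespace "spinnaker".
show_echo_logs() {
  kubectl logs --namespace spinnaker deployment/spin-echo --tail=200 | grep -i pubsub
}
```

Call `upload_test_object` first, then `show_echo_logs` if the pipeline does not start within a short time.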